When customer support tickets pile up and agents spend 20 minutes hunting through scattered documentation for a single answer, productivity plummets. Internal knowledge bases become data graveyards instead of living resources that drive efficiency.

This guide walks through building an AI workflow for internal knowledge base search that actually works. We built this system for a 150-person SaaS company's support team, reducing average ticket resolution from 18 to 12 minutes while improving answer consistency across the team.

The Knowledge Retrieval Problem in SaaS Companies

Most growing SaaS companies face the same documentation nightmare. Technical specs live in Confluence, troubleshooting guides are scattered across Google Docs, solutions from past tickets are hidden in Zendesk, and critical product changes get buried in Slack threads.

Support agents waste roughly 30% of their time searching for information they know exists somewhere. For a 20-person support team earning $45,000 each ($900,000 in annual salaries), that translates to roughly $270,000 in lost productivity every year.

The real cost extends beyond time. Inconsistent answers damage customer trust. New agents take months to build institutional knowledge. Senior agents become bottlenecks because everyone else treats them as living documentation.

Building the AI Workflow for Internal Knowledge Base Search

We automated knowledge retrieval using a Retrieval-Augmented Generation (RAG) system that transforms scattered documents into an intelligent search assistant.

1. Data Ingestion and Document Processing

First, we gathered all internal documentation sources into a unified processing pipeline.

We connected to Confluence, Google Drive, Zendesk, and internal wikis using their APIs. Python scripts with Beautiful Soup extracted text from HTML pages while maintaining document structure and metadata.

Document preprocessing cleaned inconsistent formatting, removed navigation elements, and standardized heading structures. We preserved creation dates, update timestamps, and source system tags as metadata for later filtering.
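The cleaning step can be sketched roughly as follows. This is a minimal illustration, not our production code: it uses the standard library's `html.parser` instead of Beautiful Soup so that it runs without dependencies, and the tag lists, the `extract_document` helper, and the metadata field names are all assumptions made for the example.

```python
from html.parser import HTMLParser

# Assumed list of tags that hold page chrome rather than documentation.
SKIP_TAGS = {"nav", "header", "footer", "aside", "script", "style"}
HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}
BLOCK_TAGS = {"p", "li", "div", "td", "pre"}


class _TextExtractor(HTMLParser):
    """Collects visible text, drops navigation, and standardizes headings."""

    def __init__(self):
        super().__init__()
        self.lines = []
        self._skip_depth = 0   # > 0 while inside a skipped element
        self._heading = None   # e.g. "h2" while inside a heading
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1
        elif tag in HEADING_TAGS:
            self.flush()
            self._heading = tag

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS:
            self._skip_depth = max(0, self._skip_depth - 1)
        elif tag in HEADING_TAGS:
            self.flush()
            self._heading = None
        elif tag in BLOCK_TAGS:
            self.flush()

    def handle_data(self, data):
        if not self._skip_depth:
            self._buffer.append(data)

    def flush(self):
        # Collapse runs of whitespace and emit one line per block element.
        text = " ".join("".join(self._buffer).split())
        self._buffer = []
        if text:
            prefix = "#" * int(self._heading[1]) + " " if self._heading else ""
            self.lines.append(prefix + text)


def extract_document(html, source, updated_at):
    """Return cleaned text plus the metadata kept for later filtering."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    parser.flush()
    return {
        "text": "\n".join(parser.lines),
        "metadata": {"source": source, "updated_at": updated_at},
    }
```

Calling `extract_document(page_html, "confluence", "2025-12-01")` on a fetched page would return the body text with headings rewritten to a uniform `#` prefix and the navigation, header, and footer dropped.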

2. Document Chunking for Optimal Retrieval

Large documents confuse embedding models and dilute search relevance. We chunked documents into 500-token segments with 50-token overlap to maintain context continuity.

LangChain's RecursiveCharacterTextSplitter handled this automatically, respecting paragraph breaks and section headers. Each chunk retained metadata linking back to its parent document and section.
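To make the overlap mechanics concrete, here is a dependency-free sketch of fixed-size chunking with overlap. It is not LangChain's implementation: it ignores paragraph and header boundaries, and it counts whitespace-separated words as a stand-in for model tokens. The function names and the `chunk_index` metadata key are assumptions for illustration. One thing worth checking in a real setup is that `RecursiveCharacterTextSplitter` measures length in characters by default, so token-based sizes need a token-aware length function (for example via its `from_tiktoken_encoder` constructor).

```python
def chunk_tokens(tokens, chunk_size=500, overlap=50):
    """Split a token list into windows that share `overlap` tokens."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks


def chunk_document(text, metadata, chunk_size=500, overlap=50):
    """Chunk one document and copy its metadata onto every chunk."""
    tokens = text.split()  # assumption: whitespace words approximate tokens
    return [
        {"text": " ".join(piece), "metadata": {**metadata, "chunk_index": i}}
        for i, piece in enumerate(chunk_tokens(tokens, chunk_size, overlap))
    ]
```

With the defaults, each window starts 450 tokens after the previous one, so the last 50 tokens of one chunk reappear as the first 50 of the next.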

3. Embedding Generation with Sentence Transformers

We tested OpenAI embeddings against open-source Sentence-BERT models. The all-MiniLM-L6-v2 model provided 85% of OpenAI's accuracy at 1/10th the cost for our internal use case.

Each document chunk generated a 384-dimensional vector embedding capturing semantic meaning. We processed roughly 15,000 document chunks, generating embeddings in batches of 100 to avoid API rate limits.
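A batching loop along these lines is one way to structure that step. It is a sketch under assumptions: the record shape (`id`, `values`, `metadata`) and the helper names are hypothetical, and the encoder is passed in as a function so the loop can be exercised without downloading a model. `load_minilm_encoder` shows how the Sentence-Transformers model could be plugged in and requires the `sentence-transformers` package.

```python
def batched(items, size):
    """Yield consecutive slices of `items` with at most `size` elements."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def embed_chunks(chunks, encode, batch_size=100):
    """Embed chunks batch by batch and return records ready for indexing.

    `encode` takes a list of strings and returns one vector per string.
    """
    records = []
    for batch in batched(chunks, batch_size):
        vectors = encode([chunk["text"] for chunk in batch])
        for chunk, vector in zip(batch, vectors):
            records.append({
                "id": chunk["id"],
                "values": [float(x) for x in vector],
                "metadata": chunk["metadata"],
            })
    return records


def load_minilm_encoder():
    """Return an encode() function backed by all-MiniLM-L6-v2."""
    # Imported lazily so the rest of the sketch runs without the package.
    from sentence_transformers import SentenceTransformer

    model = SentenceTransformer("all-MiniLM-L6-v2")
    return lambda texts: model.encode(texts, normalize_embeddings=True)
```

Usage would look like `records = embed_chunks(chunks, load_minilm_encoder())`. As far as we know, the model card for all-MiniLM-L6-v2 states that inputs longer than 256 word pieces are truncated by default, so it is worth comparing the chunk size against `model.max_seq_length` before indexing.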

4. Vector Database Setup with Pinecone

Pinecone hosted our vector embeddings with sub-second search performance. We configured a single index with cosine similarity matching and metadata filtering capabilities.

The database indexed all embeddings with metadata tags for source system, document type, creation date, and product area. This enabled filtered searches when agents specified context like "API documentation" or "billing issues."
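The combination of cosine similarity and metadata filtering can be illustrated with a small in-memory stand-in. This is not Pinecone's client API; it only mirrors the behavior described above on a plain Python list. In Pinecone itself the same restriction would be expressed through the metadata `filter` argument of a query. The record shape and the `product_area` key are assumptions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(records, query_vector, top_k=5, metadata_filter=None):
    """Return the top_k records by cosine similarity.

    Only records whose metadata equals every key/value pair in
    `metadata_filter` are considered.
    """
    metadata_filter = metadata_filter or {}
    candidates = [
        r for r in records
        if all(r["metadata"].get(k) == v for k, v in metadata_filter.items())
    ]
    scored = [(cosine(query_vector, r["values"]), r) for r in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [
        {"id": r["id"], "score": score, "metadata": r["metadata"]}
        for score, r in scored[:top_k]
    ]
```

An agent narrowing a search to billing content would correspond to `search(records, query_vector, metadata_filter={"product_area": "billing"})`.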

5. RAG Implementation with Context-Aware Responses

Our RAG system retrieved the top 5 most relevant document chunks for each query, then fed them to GPT-3.5-turbo with carefully crafted prompts.

The prompt template included:

- Retrieved document chunks with source citations

- Instructions to admit uncertainty when information was unclear

- Formatting requirements for consistent answer structure

- Context from the agent's current ticket when available
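A prompt builder covering the four elements listed above might look like the sketch below. The wording of the instructions, the `build_prompt` name, and the `title` and `updated_at` metadata keys are illustrative assumptions, not the exact template we shipped.

```python
def build_prompt(question, chunks, ticket_context=None):
    """Assemble the LLM prompt from retrieved chunks and optional ticket context."""
    # Number each chunk so the model can cite it as [1], [2], ...
    sources = "\n\n".join(
        f"[{i}] {c['metadata']['title']} (updated {c['metadata']['updated_at']})\n{c['text']}"
        for i, c in enumerate(chunks, start=1)
    )
    parts = [
        "Answer the support agent's question using only the numbered sources below.",
        "Cite sources by number. If the sources are unclear or do not cover "
        "the question, say so instead of guessing.",
        "Format: a short answer, then troubleshooting steps as a bulleted "
        "list, then the sources you used.",
        f"Sources:\n{sources}",
    ]
    if ticket_context:
        parts.append(f"Current ticket: {ticket_context}")
    parts.append(f"Question: {question}")
    return "\n\n".join(parts)
```

The returned string would be sent as the user message to the chat model. Keeping the ticket context optional means the same function serves searches made outside a ticket.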

6. Streamlit Interface for Agent Access

We built a simple Streamlit web interface that agents could access during ticket resolution. The search bar accepted natural language queries and returned formatted answers with source links.

Results displayed confidence scores alongside each source document's creation date and "last updated" timestamp. Agents could rate results with thumbs-up/down buttons, and those ratings fed back into our improvement pipeline.

Tools Used for the AI Knowledge Base Search

Data Processing: Python with Pandas, Beautiful Soup, and LangChain for document ingestion and chunking

Embeddings: Sentence-Transformers library with all-MiniLM-L6-v2 model for cost-effective semantic encoding

Vector Database: Pinecone for scalable similarity search with metadata filtering

Language Model: OpenAI GPT-3.5-turbo API for generating contextual answers

Interface: Streamlit for the agent-facing search application

Monitoring: Custom Python scripts logging search queries, results, and user feedback to PostgreSQL

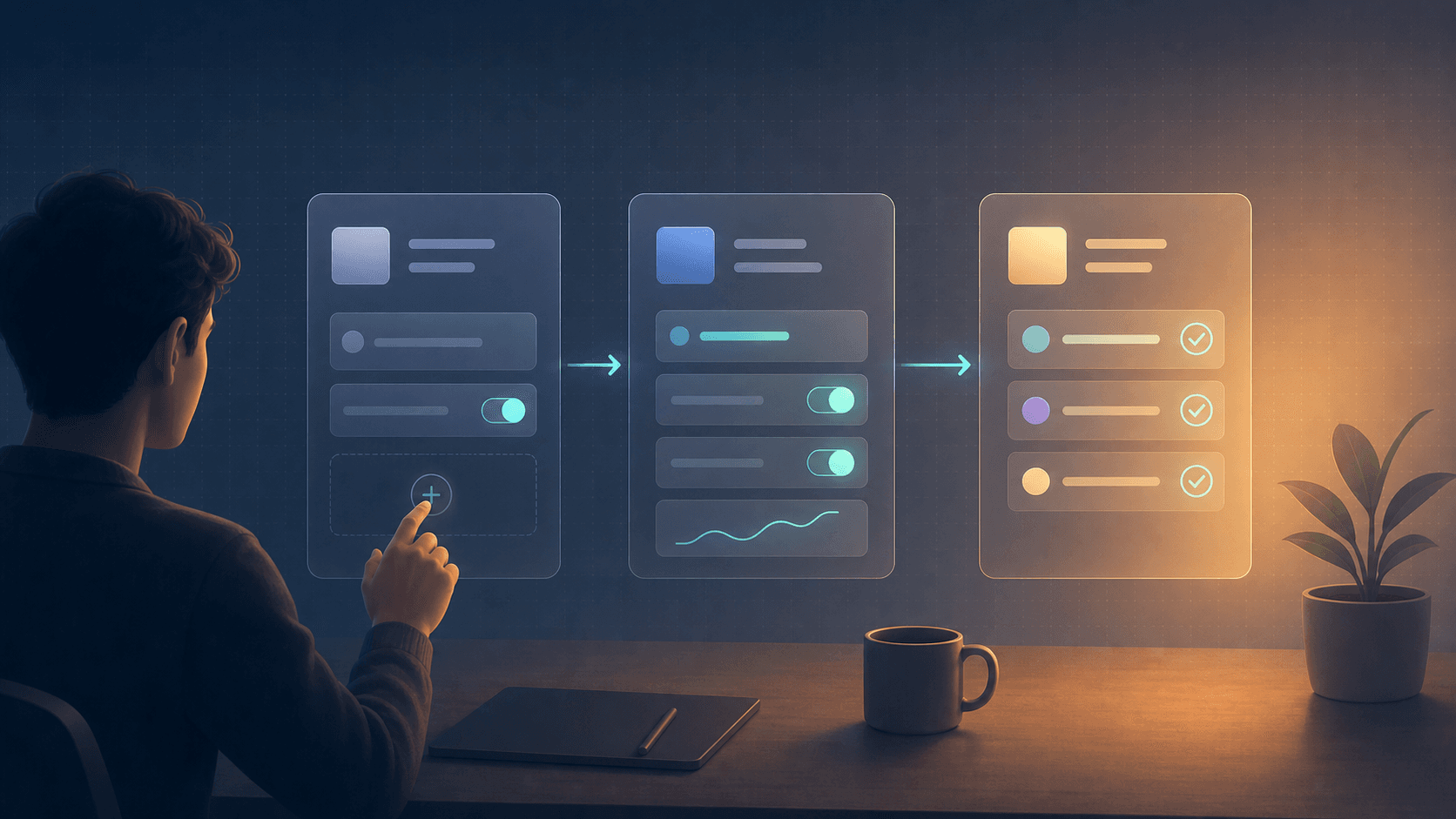

Visual Logic: RAG Search Flow

Agent Query → Embed Query → Vector Search → Retrieve Top 5 Chunks →
LLM Prompt Construction → GPT-3.5 Response → Format Answer →
Display to Agent → Collect Feedback → Update Performance Metrics

The feedback loop continuously improves results through query analysis and chunk relevance scoring based on agent ratings.
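One simple way to turn agent ratings into chunk relevance scores is to compute a helpfulness rate for each chunk. The sketch below assumes a hypothetical feedback event shape (`chunk_ids` shown with the answer, plus a `helpful` flag from the thumbs-up/down buttons) and an arbitrary minimum vote count. It illustrates the idea rather than our exact scoring.

```python
from collections import defaultdict


def chunk_feedback_scores(events, min_votes=3):
    """Return the share of thumbs-up ratings per chunk.

    Chunks with fewer than `min_votes` ratings are left out so that a
    single rating cannot dominate a chunk's score.
    """
    ups = defaultdict(int)
    totals = defaultdict(int)
    for event in events:
        for chunk_id in event["chunk_ids"]:
            totals[chunk_id] += 1
            if event["helpful"]:
                ups[chunk_id] += 1
    return {
        chunk_id: ups[chunk_id] / totals[chunk_id]
        for chunk_id in totals
        if totals[chunk_id] >= min_votes
    }
```

Chunks that score consistently low are candidates for rewriting, re-chunking, or removal during the next reindex.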

Example Output: Handling Ambiguous Queries

When an agent searches "API timeout errors," the system returns:

Answer: Based on your documentation, API timeout errors typically occur when requests exceed the 30-second limit. Check the client's request payload size and server response times in the logs.

Troubleshooting steps:

- Verify the API endpoint is responding (status check)

- Review request size - payloads over 10 MB often time out

- Check server logs for processing delays

Sources:

- API Troubleshooting Guide (updated Jan 2026)

- Timeout Configuration Docs (updated Dec 2025)

Confidence: High (92% match)

The system automatically includes document freshness indicators and confidence scores to help agents evaluate answer reliability.

Advanced Features: Handling Data Drift and Ambiguity

Flagging Outdated Information

We implemented a recency scoring system that weighs document age against search relevance. Documents older than 6 months receive lower confidence scores unless they match "evergreen" tags we manually assigned to foundational documentation.
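A minimal version of that recency adjustment is sketched below. The 0.8 penalty factor and the 182-day approximation of six months are assumptions chosen for illustration; the actual weights would need tuning against agent feedback.

```python
from datetime import date


def recency_adjusted_score(similarity, updated_at, today, evergreen=False,
                           max_age_days=182, penalty=0.8):
    """Lower the score of stale documents unless they are tagged evergreen."""
    age_days = (today - updated_at).days
    if evergreen or age_days <= max_age_days:
        return similarity
    # Assumption: a flat multiplicative penalty for anything past the cutoff.
    return similarity * penalty
```

For example, `recency_adjusted_score(0.9, date(2025, 3, 1), date(2026, 1, 15))` is penalized because the document is more than six months old, while the same call with `evergreen=True` keeps its original score.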

When conflicting information appears, the system flags potential discrepancies: "Multiple sources found. Most recent guidance from [newest document] differs from [older document]. Review both sources."

Context-Aware Disambiguation

Acronyms like "API" could reference Application Programming Interface, Account Provisioning Interface, or Automated Payment Integration in our knowledge base.

The system analyzes the agent's current ticket subject and recent searches to add context. If an agent is working on a billing ticket and searches "API docs," it prioritizes payment-related API documentation over general integration guides.
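The prioritization can be approximated with a small re-ranking step over the retrieved results. This sketch assumes each result carries a `product_area` metadata tag and uses a naive substring match against the ticket subject and recent searches. The `boost` value and function name are hypothetical.

```python
def rerank_with_context(results, ticket_subject, recent_searches=(), boost=0.1):
    """Re-order results, favoring chunks whose product area matches the context."""
    context = " ".join([ticket_subject, *recent_searches]).lower()

    def adjusted(result):
        area = result["metadata"].get("product_area", "").lower()
        # Naive substring match; a real system would want proper tagging.
        return result["score"] + (boost if area and area in context else 0.0)

    return sorted(results, key=adjusted, reverse=True)
```

With a ticket subject such as "Billing: duplicate charge", a payment-related chunk tagged `billing` can overtake a slightly higher-scoring general integration guide.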

Before vs After: Measurable Knowledge Retrieval Improvements

| Metric | Before AI Workflow | After AI Workflow | Improvement |
|---|---|---|---|
| Average resolution time | 18 minutes | 12 minutes | 33% faster |
| Time spent searching | 5.4 minutes | 2.1 minutes | 61% reduction |
| Answer consistency score | 72% | 89% | 24% improvement |
| Agent satisfaction rating | 3.2/5 | 3.7/5 | 16% increase |
| Escalation rate | 23% | 17% | 26% fewer escalations |

We tracked these metrics over 4 months with 3,200 support tickets processed through the new system.
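The improvement column is the relative change between the before and after values. A short calculation along these lines reproduces it; the dictionary keys are just labels for this example.

```python
def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100


metrics = {
    "resolution_minutes": (18, 12),
    "search_minutes": (5.4, 2.1),
    "consistency_pct": (72, 89),
    "satisfaction": (3.2, 3.7),
    "escalation_pct": (23, 17),
}

# Rounded to whole percentages, as in the table.
changes = {name: round(percent_change(b, a)) for name, (b, a) in metrics.items()}
```

Negative values indicate a decrease, which is the desired direction for resolution time, search time, and escalation rate.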

Operationalizing Your AI Knowledge Base Search

Continuous Improvement Process

We review system performance weekly through usage analytics and agent feedback. Poor-performing queries get analyzed for missing documentation or inadequate chunking strategies.

Monthly reindexing incorporates new documents and removes outdated content. We regenerate all embeddings quarterly or when major product changes occur.

Handling Edge Cases and Limitations

The system struggles with highly visual troubleshooting guides and complex multi-step processes. We supplement AI responses with links to original visual documentation for these cases.

Brand new features and undocumented edge cases still require human expertise. The AI flags these gaps for documentation team follow-up.

What You Can Realistically Expect

Initial setup takes 6-8 weeks for a medium-sized knowledge base with dedicated engineering resources. Expect 2-3 weeks for data ingestion and preprocessing, 2-3 weeks for RAG implementation and testing, and 2 weeks for interface development.

Results appear within the first month but improve significantly over quarters 2-3 as the feedback loop optimizes retrieval quality. Plan for 5-10 hours weekly of maintenance and improvement work.

Budget roughly $500-800 monthly for embedding generation and vector database hosting for 10,000-50,000 document chunks. LLM API costs vary with usage but typically add $200-400 monthly for a 20-person support team.

The biggest limitation remains data quality. Outdated, poorly written, or incomplete source documentation will produce poor AI responses regardless of technical sophistication.

Building an AI workflow for internal knowledge base search transforms scattered documentation into an intelligent assistant that scales institutional knowledge across your team. Focus on clean data ingestion, robust retrieval mechanisms, and continuous improvement through agent feedback to achieve sustainable productivity gains.