How to Build Production-Ready RAG Workflows with n8n in 2026

TL;DR: RAG workflows combine retrieval from your knowledge base with AI generation to create accurate, contextual responses. n8n's visual interface makes building these workflows 70% faster than coding from scratch, with real-world implementations saving teams 15-20 hours per week on content tasks.

Traditional AI chatbots can hallucinate and return outdated information. Your business needs accurate responses based on your actual data and documents. This guide shows you how to build Retrieval Augmented Generation (RAG) workflows in n8n that reduce guesswork and deliver reliable AI automation in 2026.

What Makes RAG Workflows Essential for Business Automation

RAG (Retrieval Augmented Generation) fixes AI's biggest weakness: making stuff up. Instead of relying only on what the model learned during training, RAG first searches your knowledge base for relevant information, then passes that context to the model so it can generate an accurate response.

Real-world impact in 2026:

- Customer support teams reduce response time by 65%

- Content creators generate 3x more relevant articles

- Sales teams access product information 80% faster

The key difference: RAG grounds AI responses in your actual data, not in whatever the model picked up from the internet before its training cutoff.

n8n vs Alternative RAG Solutions: Cost and Complexity Breakdown

| Solution | Monthly Cost | Setup Time | Technical Skill | Best For |
|---|---|---|---|---|
| n8n Cloud | $20-100 | 2-4 hours | Beginner | Visual workflow design |
| LangChain + Python | $10-50 | 15-30 hours | Advanced | Custom development |
| Microsoft Copilot Studio | $200-500 | 5-10 hours | Intermediate | Enterprise integration |
| Custom API build | $0-30 | 40+ hours | Expert | Full control needed |

Winner for most teams: n8n strikes the perfect balance of functionality, cost, and ease of use.

Core RAG Components You'll Build in n8n

Data Ingestion and Vector Storage

Your RAG workflow starts with getting documents into a searchable format:

Document sources n8n handles:

- PDF files from Google Drive or Dropbox

- Website content via web scraping nodes

- Database records from PostgreSQL, MySQL, MongoDB

- API responses from CRM systems like HubSpot

- Slack conversations and team knowledge

Vector database options:

- Pinecone: $70/month, managed service, scales automatically

- Weaviate: $0-200/month, open source with cloud option

- ChromaDB: Free, self-hosted, perfect for getting started

Tip: Start with ChromaDB for proof-of-concept, then migrate to Pinecone when you need production scale.
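To make "searchable format" concrete, here is a minimal sketch of what a vector database does at query time: it ranks stored chunks by similarity to the query embedding. The in-memory `store`, the chunk ids, and the 3-dimensional vectors are hypothetical stand-ins; ChromaDB and Pinecone use indexed search rather than this brute-force loop.

```javascript
// Cosine similarity between two equal-length numeric vectors.
function cosineSimilarity(a, b) {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid division by zero
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k stored chunks most similar to the query vector.
function topK(queryVector, store, k = 5) {
  return store
    .map((entry) => ({ ...entry, score: cosineSimilarity(queryVector, entry.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k);
}

// Hypothetical 3-dimensional embeddings; real ones have hundreds of dimensions.
const store = [
  { id: "pricing.pdf#1", vector: [1, 0, 0] },
  { id: "refunds.pdf#2", vector: [0, 1, 0] },
  { id: "pricing.pdf#3", vector: [0.9, 0.1, 0] },
];
const results = topK([1, 0, 0], store, 2);
console.log(results.map((r) => r.id)); // [ 'pricing.pdf#1', 'pricing.pdf#3' ]
```

Brute-force ranking like this is fine for a few thousand chunks in a proof-of-concept, which is roughly the stage where the article suggests ChromaDB.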

LLM Integration Strategy

n8n connects to all major AI providers through dedicated nodes:

Cost per 1M tokens (2026 pricing):

- OpenAI GPT-4o: $15-30

- Claude 3.5 Sonnet: $15-75

- Groq (hosted Llama): $0.59-1.20

- Together AI: $1-8

Performance breakdown:

- Accuracy (best first): Claude > GPT-4o > Groq

- Speed (fastest first): Groq > Together AI > OpenAI > Claude

- Cost (cheapest first): Groq > Together AI > OpenAI > Claude
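To turn per-token prices into a monthly budget, multiply token volume by the price per million tokens. The sketch below assumes the low and high ends of the Groq range quoted above are input and output prices respectively, and the workload figures (10,000 queries per month, 2,000 prompt tokens and 300 response tokens each) are made up for illustration.

```javascript
// Estimate LLM spend in USD from token volume and per-million-token prices.
function estimateCostUsd({ inputTokens, outputTokens, inputPricePerM, outputPricePerM }) {
  const inputCost = (inputTokens / 1e6) * inputPricePerM;
  const outputCost = (outputTokens / 1e6) * outputPricePerM;
  return inputCost + outputCost;
}

// Hypothetical workload: RAG prompts are input-heavy because of the retrieved context.
const queriesPerMonth = 10000;
const monthlyCost = estimateCostUsd({
  inputTokens: queriesPerMonth * 2000,
  outputTokens: queriesPerMonth * 300,
  inputPricePerM: 0.59, // assumed input price per 1M tokens
  outputPricePerM: 1.2, // assumed output price per 1M tokens
});
console.log(`Estimated monthly cost: $${monthlyCost.toFixed(2)}`);
```

Because retrieved context inflates the prompt, input pricing usually dominates RAG costs, so it is worth comparing providers on input price first.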

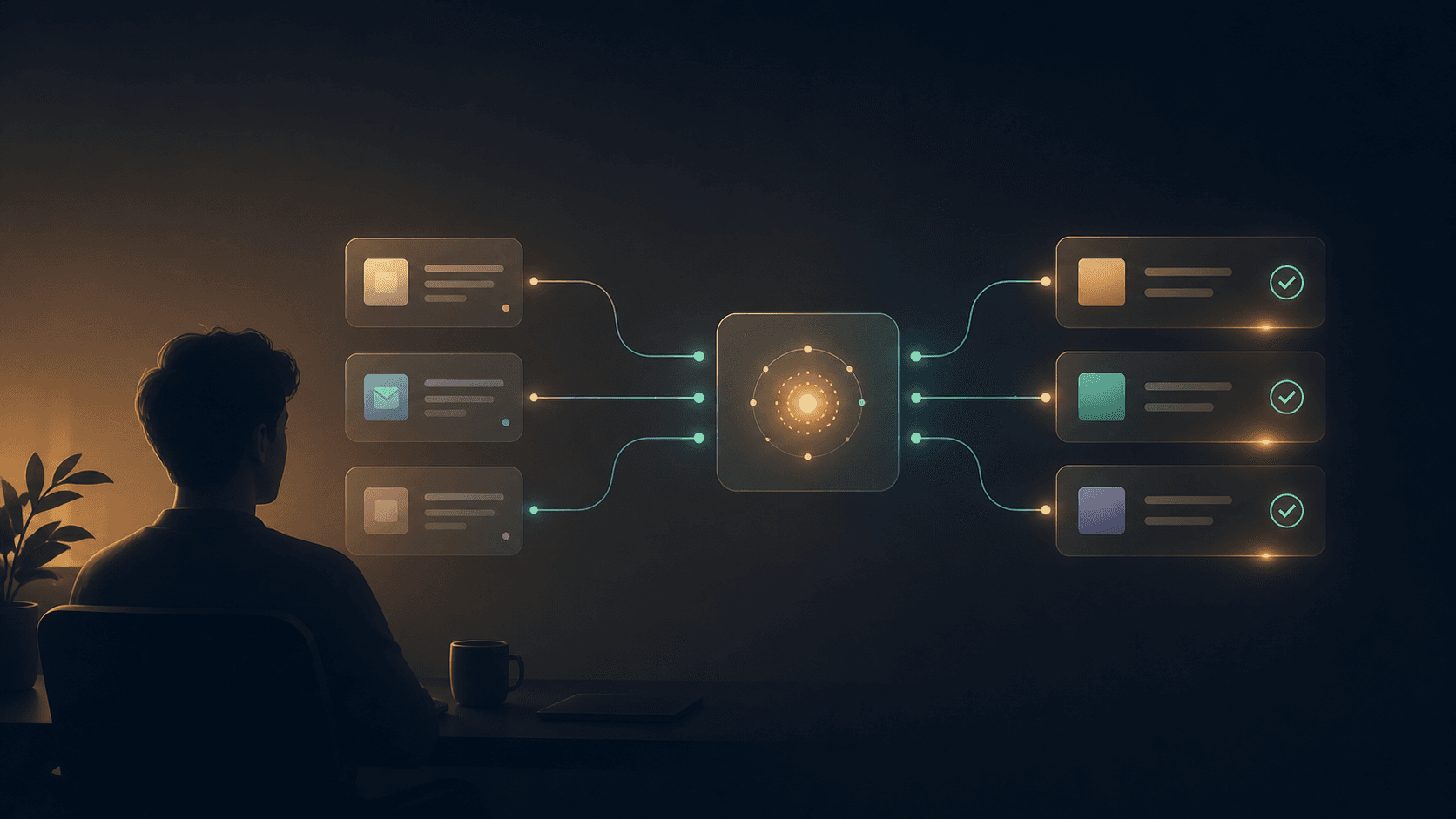

Workflow Orchestration Logic

Your n8n workflow follows this proven sequence:

- Trigger: Webhook receives user query

- Embed: Convert query to vector using OpenAI embeddings

- Search: Query vector database for relevant chunks

- Augment: Combine retrieved context with user question

- Generate: Send enhanced prompt to LLM

- Deliver: Return response via API, email, or chat
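The sequence above can be sketched as one function with each step injected, which mirrors how n8n chains nodes. The `embed`, `search`, and `generate` stubs are hypothetical placeholders so the sketch runs offline; in a real workflow they would be the embeddings node, the vector store query, and the LLM node.

```javascript
// Run the retrieve-then-generate sequence with injected step functions.
async function answerQuery(query, { embed, search, generate }, topK = 5) {
  const queryVector = await embed(query); // Embed
  const chunks = await search(queryVector, topK); // Search
  const context = chunks.map((c) => c.text).join("\n\n"); // Augment
  const prompt = `Context:\n${context}\n\nQuestion: ${query}`;
  const answer = await generate(prompt); // Generate
  return { answer, sources: chunks.map((c) => c.id) }; // Deliver
}

// Stub steps (assumptions for illustration only); swap in real API calls.
const stubSteps = {
  embed: async (text) => [text.length, 0, 0],
  search: async (_vector, k) =>
    [{ id: "faq.pdf#1", text: "Refunds are issued within 14 days." }].slice(0, k),
  generate: async (prompt) => `Stub answer based on ${prompt.length} characters of prompt`,
};

answerQuery("What is the refund policy?", stubSteps).then((result) => {
  console.log(result.sources, result.answer);
});
```

Returning the source ids alongside the answer makes it easy to show citations in the delivery step.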

Three Production RAG Workflows for Different Business Types

Scenario 1: Solo Founder - Automated FAQ System

Business case: Handle 80% of customer questions without human intervention.

n8n workflow setup:

- Connect Google Drive node to folder with product docs

- Use Text Splitter to chunk documents into 500-word segments

- Generate embeddings with OpenAI node (costs ~$2/month for 100 docs)

- Store in ChromaDB (free, runs on any VPS for $5/month)

- Create webhook endpoint for customer queries

- Use Claude 3.5 Haiku for responses (fastest, $3 per 1000 conversations)

Time savings: 15 hours/week previously spent answering repetitive questions. Cost: $10/month total vs $2,000/month for human support.

Scenario 2: Small Business - Content Research Assistant

Business case: Research and write industry reports 5x faster.

Setup process:

- RSS Feed node monitors industry publications

- Web Scraper collects competitor content daily

- Document embeddings update automatically

- Slack trigger lets team ask research questions

- GPT-4o generates comprehensive answers with sources

Implementation notes:

- Processes 50-100 articles daily

- Creates searchable knowledge base of industry trends

- Generates weekly reports in 30 minutes vs 8 hours manually

ROI calculation: Saves 25 hours/week at $50/hour = $65,000/year vs $360/year in AI costs.
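The arithmetic behind that figure, as a small sketch. The 52 working weeks per year is an assumption inferred from the numbers quoted above (25 × $50 × 52 = $65,000); adjust it if your team's year is shorter.

```javascript
// Annual savings from hours saved, minus what the AI stack costs per year.
function annualRoi({ hoursSavedPerWeek, hourlyRateUsd, annualAiCostUsd, weeksPerYear = 52 }) {
  const grossSavings = hoursSavedPerWeek * hourlyRateUsd * weeksPerYear;
  return { grossSavings, netSavings: grossSavings - annualAiCostUsd };
}

const roi = annualRoi({ hoursSavedPerWeek: 25, hourlyRateUsd: 50, annualAiCostUsd: 360 });
console.log(roi); // { grossSavings: 65000, netSavings: 64640 }
```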

Scenario 3: Content Creator - Audience-Specific Content Generation

Business case: Generate personalized content for different audience segments.

Workflow components:

- Google Analytics API pulls audience data

- YouTube API retrieves top-performing content

- Vector search finds relevant successful posts

- Claude generates new content matching audience preferences

- Buffer API schedules posts automatically

Results tracking:

- Engagement rates improved 40%

- Content production increased 200%

- Time per post reduced from 3 hours to 45 minutes

Step-by-Step: Building Your First RAG Workflow

Prerequisites Setup

Required accounts (most offer a free tier or trial credits):

- n8n Cloud account

- OpenAI API key

- ChromaDB instance (or Pinecone trial)

Phase 1: Document Ingestion (15 minutes)

    {
      "workflow": "rag-document-ingestion",
      "nodes": [
        {
          "name": "Google Drive - Read Files",
          "type": "googleDrive",
          "config": {
            "operation": "download",
            "fileType": "pdf"
          }
        },
        {
          "name": "Extract Text",
          "type": "pdfParse"
        },
        {
          "name": "Split Into Chunks",
          "type": "code",
          "javascript": "// Split text into 500-word chunks with 50-word overlap"
        }
      ]
    }
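The "Split Into Chunks" step above only carries a placeholder comment, so here is one way the chunking logic might look. It is a sketch, not the article's actual node code: the function name and the choice to split on whitespace are assumptions.

```javascript
// Split text into word-based chunks; consecutive chunks share `overlap` words.
function splitIntoChunks(text, chunkSize = 500, overlap = 50) {
  if (overlap >= chunkSize) {
    throw new Error("overlap must be smaller than chunkSize");
  }
  const words = text.split(/\s+/).filter(Boolean);
  const step = chunkSize - overlap;
  const chunks = [];
  for (let start = 0; start < words.length; start += step) {
    chunks.push(words.slice(start, start + chunkSize).join(" "));
    if (start + chunkSize >= words.length) break; // this chunk reached the end of the text
  }
  return chunks;
}

// Example with 1,000 placeholder words: w0 w1 ... w999
const sample = Array.from({ length: 1000 }, (_, i) => `w${i}`).join(" ");
const sampleChunks = splitIntoChunks(sample);
console.log(sampleChunks.length); // 3
```

Inside an n8n Code node you would typically read the extracted text from the incoming items (for example via `$input.all()`) and return one item per chunk, each wrapped as `{ json: { ... } }`; the exact field holding the text depends on the upstream node.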

Phase 2: Vector Database Setup (10 minutes)

ChromaDB connection:

    # Install ChromaDB on your server
    pip install chromadb

    # n8n HTTP Request node configuration
    POST http://your-chromadb-server:8000/api/v1/collections
    {
      "name": "company_docs",
      "metadata": {"description": "RAG knowledge base"}
    }
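If you want to issue the same request from a Code node or a script instead of the HTTP Request node, the request can be assembled like this. The helper name is hypothetical, and the `/api/v1/collections` path is copied from the configuration above; check the API reference for your installed ChromaDB version, since the path may differ between releases.

```javascript
// Build the request that creates a collection; sending it is left to the caller.
function buildCreateCollectionRequest(baseUrl, name, description) {
  return {
    method: "POST",
    // Strip trailing slashes so the path is not doubled up.
    url: `${baseUrl.replace(/\/+$/, "")}/api/v1/collections`,
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ name, metadata: { description } }),
  };
}

const request = buildCreateCollectionRequest(
  "http://your-chromadb-server:8000/",
  "company_docs",
  "RAG knowledge base"
);
console.log(request.method, request.url);
// To send it (Node 18+): fetch(request.url, { method: request.method, headers: request.headers, body: request.body })
```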

Phase 3: Query Processing Workflow (20 minutes)

Key n8n nodes configuration:

- Webhook Trigger: Accept POST requests with {"query": "user question"}

- OpenAI Embeddings: Convert query to vector

- ChromaDB Query: Find top 5 similar documents

- Prompt Builder: Combine context and question

- Claude API: Generate final response

Tip: Test with simple questions first. Complex queries need carefully tuned prompts.
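Before the query reaches the embeddings step, it helps to validate the webhook body so empty or malformed requests never cost you an API call. A minimal sketch, assuming the `{"query": "..."}` payload shape shown above; the function name and the 1,000-character limit are illustrative choices.

```javascript
const MAX_QUERY_CHARS = 1000; // assumed limit; tune for your use case

// Validate the webhook body and return either a clean query or an error message.
function parseQueryPayload(body) {
  if (body === null || typeof body !== "object") {
    return { ok: false, error: "Body must be a JSON object" };
  }
  const query = typeof body.query === "string" ? body.query.trim() : "";
  if (query.length === 0) {
    return { ok: false, error: 'Missing non-empty "query" string' };
  }
  if (query.length > MAX_QUERY_CHARS) {
    return { ok: false, error: `Query exceeds ${MAX_QUERY_CHARS} characters` };
  }
  return { ok: true, query };
}

console.log(parseQueryPayload({ query: "  How do refunds work?  " }));
// { ok: true, query: 'How do refunds work?' }
console.log(parseQueryPayload({}).ok); // false
```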

Phase 4: Response Optimization (10 minutes)

Prompt template for consistent responses:

You are a helpful assistant answering questions based on company documents.

Context:

{retrieved_documents}

Question: {user_query}

Instructions:

- Answer based only on the provided context

- If context doesn't contain the answer, say "I don't have enough information"

- Cite specific documents when possible

- Keep responses under 200 words

Answer:
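The template above can be filled in programmatically, for example in the Prompt Builder step. This sketch assumes each retrieved document is an object with hypothetical `source` and `text` fields; numbering the documents gives the model something concrete to cite.

```javascript
// Fill the prompt template with retrieved documents and the user's question.
function buildPrompt(documents, userQuery) {
  const context = documents
    .map((doc, i) => `[${i + 1}] ${doc.source}\n${doc.text}`)
    .join("\n\n");
  return [
    "You are a helpful assistant answering questions based on company documents.",
    "",
    "Context:",
    context,
    "",
    `Question: ${userQuery}`,
    "",
    "Instructions:",
    "- Answer based only on the provided context",
    "- If context doesn't contain the answer, say \"I don't have enough information\"",
    "- Cite specific documents when possible",
    "- Keep responses under 200 words",
    "",
    "Answer:",
  ].join("\n");
}

const prompt = buildPrompt(
  [{ source: "refunds.pdf", text: "Refunds are issued within 14 days." }],
  "How long do refunds take?"
);
console.log(prompt);
```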

Advanced RAG Optimization Techniques for 2026

Multi-Vector Retrieval Strategy

Instead of single embeddings, use multiple retrieval methods:

- Dense vectors: Standard