How to Chain AI Models Together for Complex Automation (2026 Guide)

TL;DR: A single AI model usually excels at one kind of task. By chaining several models together, you can build workflows that analyze images, process text, and generate content automatically. This guide walks through three practical setups with real tools and code examples.

Most AI tools solve a single problem: one analyzes images, another summarizes text, another generates content. But real business problems often require several AI capabilities working together.

That gap creates a bottleneck: you end up copying outputs between different AI services by hand. You waste time, lose context between steps, and can't build truly automated workflows.

This guide shows you how to chain AI models together using tools like n8n, LangChain, and Python to create powerful automation pipelines that handle complex multi-step tasks.

What Is AI Model Chaining and Why It Matters

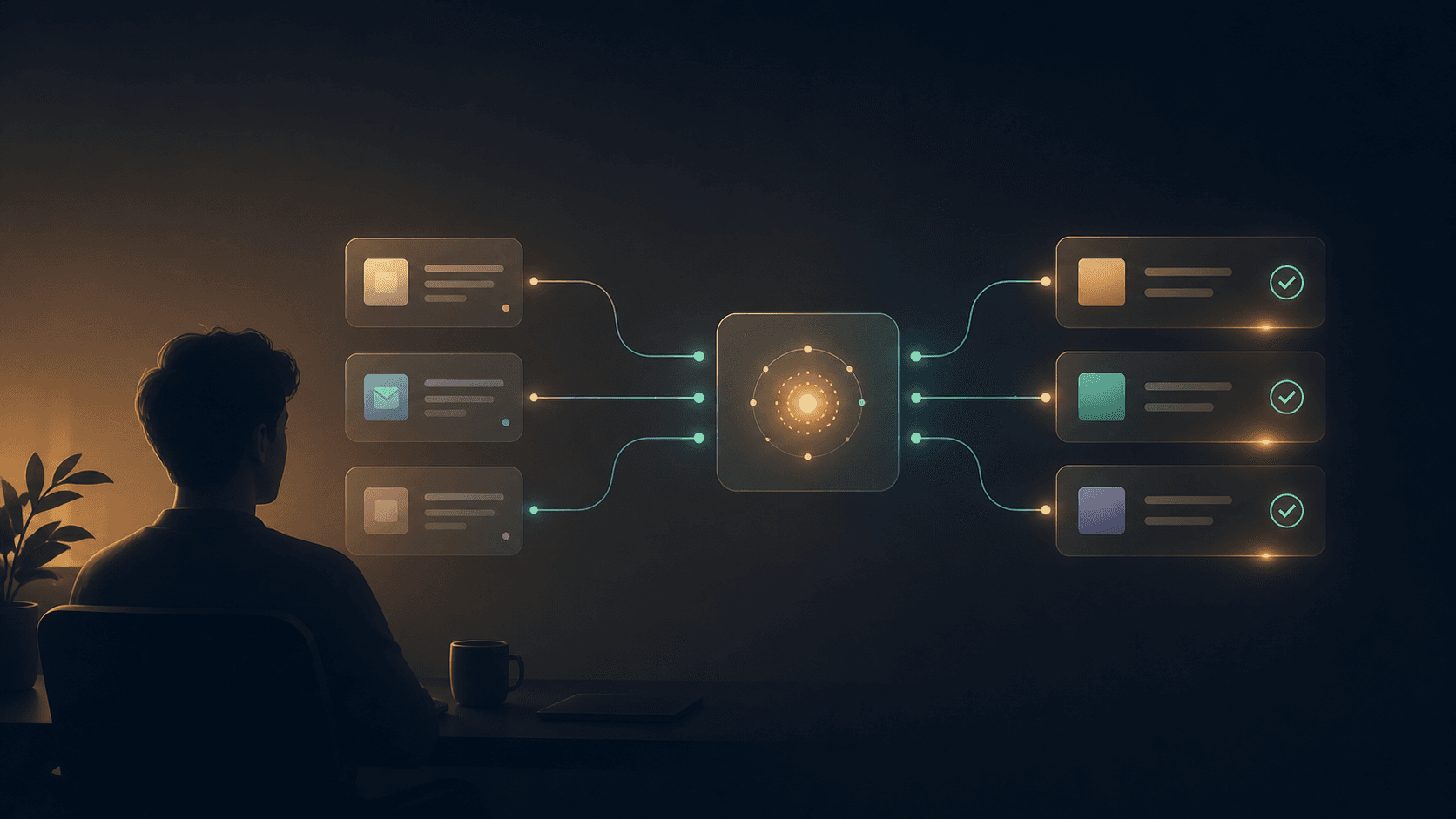

AI model chaining connects multiple specialized AI models so that data passes through a sequence of steps, with each model's output feeding the next. Instead of asking one general-purpose model to handle every step, you use several models that each excel at a specific task.

Think of it like an assembly line. One model extracts text from images, another analyzes sentiment, and a third generates a response based on both inputs.
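The assembly-line idea can be sketched in plain Python as a list of step functions that each read a shared context dictionary and add their own output to it, so the last step sees everything earlier steps produced. This is a minimal, offline sketch: `run_chain` is a hypothetical helper, and the three steps are stand-ins for real OCR, sentiment, and generation models.

```python
from typing import Callable

# A step takes the shared context and returns a dict of new fields to add to it.
Step = Callable[[dict], dict]


def run_chain(steps: list[Step], initial: dict) -> dict:
    """Run each step in order, merging its output into a shared context."""
    context = dict(initial)
    for step in steps:
        context.update(step(context))
    return context


# Hypothetical stand-ins for real models (OCR, sentiment, text generation).
def extract_text(ctx: dict) -> dict:
    # A real chain would call a vision/OCR model on ctx["image_path"].
    return {"text": "Loved the new blue mug!"}


def analyze_sentiment(ctx: dict) -> dict:
    # A real chain would call a sentiment model; this just checks a few keywords.
    positive = any(w in ctx["text"].lower() for w in ("love", "great", "thanks"))
    return {"sentiment": "positive" if positive else "neutral"}


def generate_reply(ctx: dict) -> dict:
    # The last step uses both earlier outputs, as in the assembly-line example.
    return {"reply": f"Thanks for the {ctx['sentiment']} feedback on: {ctx['text']}"}


result = run_chain(
    [extract_text, analyze_sentiment, generate_reply],
    {"image_path": "photo.jpg"},
)
print(result["reply"])  # Thanks for the positive feedback on: Loved the new blue mug!
```

With this structure, swapping a model means replacing one function in the list; the other steps are unaffected.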

Here's why this approach often works better than relying on a single model:

- Specialized performance: Each model does what it's best at

- Cost efficiency: Use cheaper models for simple tasks, premium ones for complex reasoning

- Flexibility: Swap models in and out without rebuilding your entire system

- Scalability: Add new capabilities by adding new models to the chain
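Cost efficiency in practice usually means routing each task to the cheapest model that can handle it. A minimal sketch, assuming a hypothetical catalogue of three models whose names, prices, and complexity ratings are made-up placeholders, not real pricing:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # hypothetical price in USD
    max_complexity: int        # highest task complexity (1-3) this model should handle


# Hypothetical catalogue; replace with the models and prices you actually use.
MODELS = [
    ModelOption("small-fast-model", 0.0005, 1),
    ModelOption("mid-tier-model", 0.003, 2),
    ModelOption("premium-reasoning-model", 0.03, 3),
]


def pick_model(complexity: int) -> ModelOption:
    """Return the cheapest model rated for the given task complexity."""
    candidates = [m for m in MODELS if m.max_complexity >= complexity]
    if not candidates:
        raise ValueError(f"No model handles complexity {complexity}")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)


def estimate_cost(model: ModelOption, tokens: int) -> float:
    """Estimated USD cost of sending `tokens` tokens to `model`."""
    return model.cost_per_1k_tokens * tokens / 1000


# Sentiment tagging is simple; drafting a strategy memo needs reasoning.
print(pick_model(1).name)  # small-fast-model
print(pick_model(3).name)  # premium-reasoning-model
```

Keeping the catalogue in one place also supports the flexibility point: adding or replacing a model is a one-line change.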

Three Practical AI Chaining Scenarios

Scenario 1: Solo Founder - Social Media Content Pipeline

Your situation: You run a small business and need to create social media posts from customer photos and feedback.

The chain:

- Image analysis model identifies products/scenes

- Sentiment analysis processes customer comments

- Content generation model creates engaging posts

Time savings: 3 hours of manual work → 10 minutes automated

Cost: ~$15/month for API calls (processing 100 posts)

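```python
# Basic workflow structure.
# vision_model, sentiment_model and content_model are placeholders for whichever
# API clients you choose; each is assumed to expose the method called below.

def process_social_post(image_path, customer_comment):
    # Step 1: Analyze the image (identify products or scenes)
    image_description = vision_model.analyze(image_path)

    # Step 2: Analyze the sentiment of the customer comment
    sentiment = sentiment_model.analyze(customer_comment)

    # Step 3: Generate a post from both earlier outputs
    post = content_model.generate({
        "image_context": image_description,
        "customer_sentiment": sentiment,
        "tone": "engaging",
    })

    return post
```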

Scenario 2: Small Business - Customer Support Automation

Your situation: You receive 50+ support emails daily and need intelligent routing and initial responses.

The chain:

- Intent classification determines issue type

- Entity extraction finds order numbers, dates, products

- Knowledge base search retrieves relevant info

- Response generation creates personalized replies

Time savings: 4 hours daily → 30 minutes of review time

Cost: ~$40/month for API usage
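A rough sketch of that four-step support chain follows. Every step is a simplified offline stand-in, not a real model: keyword matching replaces the intent classifier, a regex for a hypothetical `ORD-12345` order-number format replaces entity extraction, a dictionary replaces the knowledge base, and a string template replaces response generation. The knowledge-base text is placeholder content.

```python
import re

# Hypothetical knowledge base; in practice this would be a search index or vector store.
KNOWLEDGE_BASE = {
    "shipping": "Orders usually ship within 2 business days.",
    "refund": "Refunds are processed within 5-7 business days after we receive the item.",
    "other": "A team member will review your message shortly.",
}

# Stand-in for an intent-classification model: the first matching intent wins.
INTENT_KEYWORDS = {
    "refund": ("refund", "money back", "return"),
    "shipping": ("shipping", "delivery", "where is my order", "tracking"),
}

# Assumed order-number format, e.g. ORD-12345.
ORDER_PATTERN = re.compile(r"\bORD-\d{5}\b")


def classify_intent(email: str) -> str:
    text = email.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(k in text for k in keywords):
            return intent
    return "other"


def extract_entities(email: str) -> dict:
    return {"order_numbers": ORDER_PATTERN.findall(email)}


def handle_email(email: str) -> dict:
    intent = classify_intent(email)        # Step 1: intent classification
    entities = extract_entities(email)     # Step 2: entity extraction
    article = KNOWLEDGE_BASE[intent]       # Step 3: knowledge base lookup
    orders = ", ".join(entities["order_numbers"]) or "your order"
    reply = f"Thanks for reaching out about {orders}. {article}"  # Step 4: draft reply
    return {"intent": intent, "entities": entities, "draft_reply": reply}


print(handle_email("Hi, I'd like a refund for ORD-48213, the mug arrived cracked."))
```

Because the output is a draft plus the extracted fields, a person can review it before sending, which fits the review-time estimate above.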

Scenario 3: Content Creator - Video Analysis and Optimization

Your situation: You create educational videos and need to automatically generate descriptions, tags, and social clips.

The chain:

- Speech-to-text extracts audio content

- Key topic extraction identifies main themes

- Thumbnail analysis evaluates visual appeal

- Content optimization suggests improvements

Time savings: 2 hours per video → 15 minutes

Cost: ~$25/month for processing 20 videos
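The key-topic step needs only the transcript text from the speech-to-text step. As an offline illustration, the sketch below swaps in simple word-frequency counting where the guide uses an AI model; the stopword list and sample transcript are hypothetical, and a model would handle synonyms and plurals (note `decorator` vs. `decorators` in the output) far better.

```python
import re
from collections import Counter

# Minimal stopword list (hypothetical; a real pipeline would use a fuller list or a model).
STOPWORDS = {
    "the", "a", "an", "and", "or", "of", "to", "in", "is", "it", "this",
    "that", "we", "you", "for", "on", "with", "how", "what", "are", "be",
}


def extract_topics(transcript: str, top_n: int = 5) -> list[str]:
    """Return the most frequent non-stopword terms as candidate tags."""
    words = re.findall(r"[a-z']+", transcript.lower())
    counts = Counter(w for w in words if w not in STOPWORDS and len(w) > 2)
    # most_common keeps first-seen order among equal counts
    return [word for word, _ in counts.most_common(top_n)]


def build_metadata(transcript: str) -> dict:
    # Hand the extracted topics to the next step (tags and description).
    topics = extract_topics(transcript)
    description = f"In this video: {', '.join(topics[:3])}."
    return {"tags": topics, "description": description}


transcript = (
    "Today we cover Python decorators. Decorators wrap functions. "
    "We write a timing decorator, then a caching decorator for Python functions."
)
metadata = build_metadata(transcript)
print(metadata)
```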

| Setup Type | Monthly Cost | Setup Time | Difficulty Level | Output Quality |
|---|---|---|---|---|
| Solo Founder Social Pipeline | ~$15 | 2 hours | Beginner | Good |
| Small Business Support | ~$40 | 4 hours | Intermediate | Excellent |
| Content Creator Video | ~$25 | 3 hours | Intermediate | Very Good |

Essential Tools for Model Chaining in 2026

Visual Workflow Tools

n8n is a strong choice for building AI workflows without heavy coding. It connects to most major AI APIs and handles data transformation between models.

Zapier works for simple chains but gets expensive with complex, multi-step workflows, so it is better suited to basic automation.

Microsoft Power Automate excels in corporate environments with strong Office 365 integration.

Tip: Start with n8n's free self-hosted version to test your workflows before scaling up.

AI API Services

- OpenAI API (GPT-4, DALL-E, Whisper): Premium quality but higher costs

- Anthropic Claude: Excellent for reasoning and analysis tasks

- Groq: Lightning-fast inference for real-time applications

- Hugging Face: Free and open-source models with decent quality
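Since these services trade off cost, speed, and quality, a chain can try one provider first and fall back to another when a call fails. A minimal sketch, assuming each provider is wrapped in a plain function that takes a prompt and returns text; `fast_provider` and `premium_provider` are hypothetical stand-ins, not real SDK calls:

```python
from typing import Callable

Provider = Callable[[str], str]


def call_with_fallback(prompt: str, providers: list[tuple[str, Provider]]) -> tuple[str, str]:
    """Try providers in order; return (provider_name, output) from the first that succeeds."""
    errors = []
    for name, provider in providers:
        try:
            return name, provider(prompt)
        except Exception as exc:  # in real code, catch the SDK's specific error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("All providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real SDK calls.
def fast_provider(prompt: str) -> str:
    raise TimeoutError("request timed out")


def premium_provider(prompt: str) -> str:
    return f"Summary of: {prompt}"


name, output = call_with_fallback(
    "Q3 sales report",
    [("fast", fast_provider), ("premium", premium_provider)],
)
print(name, output)  # premium Summary of: Q3 sales report
```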

Programming Frameworks

LangChain simplifies building complex AI applications with pre-built connectors and memory management.

Semantic Kernel (Microsoft) offers enterprise-grade orchestration with strong .NET integration.

Custom Python scripts give you complete control but require more development time.

Step-by-Step: Building Your First AI Chain

Let's build a document analysis pipeline that processes uploaded PDFs through multiple AI models.

Step 1: Set Up Your Environment

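```bash
# Install required packages. LangChain is pinned below 1.0 so the legacy
# load_summarize_chain helper used in Step 2 is available from langchain.chains;
# PyPDFLoader reads PDFs with pypdf (not PyPDF2).
pip install "langchain>=0.3,<1.0" langchain-community langchain-openai pypdf python-dotenv

# Create environment file
echo "OPENAI_API_KEY=your_key_here" > .env
```

Step 2: Create the Model Chain

```python
from dotenv import load_dotenv
from langchain.chains.summarize import load_summarize_chain
from langchain_community.document_loaders import PyPDFLoader
from langchain_openai import OpenAI
from langchain_text_splitters import CharacterTextSplitter

# Load OPENAI_API_KEY from the .env file created in Step 1
load_dotenv()

# Initialize models
llm = OpenAI(temperature=0)
text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)


def process_document(pdf_path):
    # Step 1: Load the PDF and split it into ~1,000-character chunks
    loader = PyPDFLoader(pdf_path)
    docs = text_splitter.split_documents(loader.load())

    # Step 2: Summarize (map_reduce summarizes each chunk, then combines the summaries)
    summary_chain = load_summarize_chain(llm, chain_type="map_reduce")
    summary = summary_chain.invoke({"input_documents": docs})["output_text"]

    # Step 3: Extract key insights from the summary with a second LLM call
    insight_prompt = f"Extract 3 key business insights from: {summary}"
    insights = llm.invoke(insight_prompt)

    return {
        "summary": summary,
        "insights": insights,
    }
```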

Step 3: Add Error Handling and Monitoring

```python
import logging
from datetime import datetime

# Show INFO-level messages (the root logger defaults to WARNING)
logging.basicConfig(level=logging.INFO)


def robust_process_document(pdf_path):
    try:
        logging.info(f"Processing document: {pdf_path}")
        result = process_document(pdf_path)

        # Log completion time
        logging.info(f"Processed successfully at {datetime.now()}")
        return result

    except Exception as e:
        # logging.exception also records the full traceback
        logging.exception(f"Processing failed: {e}")
        return {"error": "Processing failed", "details": str(e)}
```

Tip: Always test with small documents first. PDF processing can be memory-intensive with large files.
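The logging in Step 3 records only whether a run succeeded. To see which step in a chain is slow, one option is a decorator that times each step. A standard-library sketch; `timed_step` and the in-memory `STEP_TIMINGS` store are hypothetical names, and `summarize` is a stand-in for a model call:

```python
import functools
import logging
import time

logging.basicConfig(level=logging.INFO)

# Recorded durations per step name, in seconds (hypothetical in-memory store).
STEP_TIMINGS: dict[str, list[float]] = {}


def timed_step(name: str):
    """Decorator that logs and records how long a chain step takes."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            finally:
                # Runs on success and on failure, so failed steps are timed too
                elapsed = time.perf_counter() - start
                STEP_TIMINGS.setdefault(name, []).append(elapsed)
                logging.info("Step %s took %.3f s", name, elapsed)
        return wrapper
    return decorator


@timed_step("summarize")
def summarize(text: str) -> str:
    # Stand-in for a model call
    time.sleep(0.01)
    return text[:20]


summarize("A long document about quarterly revenue trends.")
print(STEP_TIMINGS["summarize"])
```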

Common Challenges and How to Solve Them

Data Format Mismatches

Different models expect different input formats. An image model might want base64-encoded data, while a text model needs plain strings.

Solution: Create standardized data transformation functions between each step.

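```python
def standardize_output(model_output, target_format):
    """Convert one step's output into the format the next step expects."""
    # Dict -> plain text: prefer the 'content' field, else stringify the dict
    if target_format == "text" and isinstance(model_output, dict):
        return model_output.get("content", str(model_output))
    # Plain text -> dict: wrap the string under a 'content' key
    elif target_format == "json" and isinstance(model_output, str):
        return {"content": model_output}
    # Already in the right shape (or an unhandled combination): pass through
    return model_output
```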
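For the base64 mismatch described above, the conversion is a few lines of standard-library code. A sketch, assuming the vision API accepts a data URL of the form `data:<mime-type>;base64,<payload>` (check your provider's documentation); `image_to_data_url` and `data_url_to_bytes` are hypothetical helper names:

```python
import base64
import mimetypes
import tempfile
from pathlib import Path


def image_to_data_url(path: str) -> str:
    """Read an image file and return it as a base64 data URL."""
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"Not a recognized image type: {path}")
    payload = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{payload}"


def data_url_to_bytes(data_url: str) -> bytes:
    """Inverse step: recover the raw bytes from a base64 data URL."""
    _, payload = data_url.split(",", 1)
    return base64.b64decode(payload)


# Example: a tiny fake "PNG" (just the 8-byte PNG signature, for illustration)
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "photo.png"
    sample.write_bytes(b"\x89PNG\r\n\x1a\n")
    url = image_to_data_url(str(sample))

print(url)  # data:image/png;base64,iVBORw0KGgo=
```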

API Rate Limiting

Chaining multiple API calls can quickly hit rate limits, especially with free tiers.

Solution: Implement exponential backoff and consider model alternatives for high-volume processing.

import time

import random

def api_call_with_retry(api_function, max_retries=3):

for attempt in range(max_retries):

try:

return api_function()

except RateLimitError:

wait_time = (2 ** attempt) +