E-commerce businesses lose hours daily answering repetitive customer questions about shipping, returns, and basic policies. Running Llama 3 locally with Ollama gives you an AI assistant that drafts answers to these FAQs in seconds, saving time while keeping customer data private. This guide shows you exactly how to set up local customer support automation without coding or monthly subscription fees.

Problem: E-commerce Customer Support Drains Resources Daily

Small e-commerce businesses face a constant stream of identical customer questions. "What's your return policy?" "How long does shipping take?" "Do you accept PayPal?" These queries consume 2-4 hours daily for business owners who should focus on growth instead of inbox management.

The cost extends beyond time. Slow response times frustrate customers and reduce conversion rates. Hiring dedicated support staff costs roughly $30,000-$40,000 annually. Third-party AI chatbot services charge $50-$200 monthly plus usage fees, making them expensive for smaller businesses with tight margins.

Exact Workflow: Building Local Customer Support Automation

Step 1: Download Ollama

- Visit ollama.ai and download the Windows installer

- Run the installer and complete the setup process

- Open a new Command Prompt window afterward so the updated PATH is picked up (restart your computer if the `ollama` command is still not recognized)

Step 2: Install Llama 3 Model

- Open Command Prompt (press Win+R, type "cmd")

- Type `ollama pull llama3.1:8b` and press Enter

- Wait for the roughly 4.7GB download to complete (about 10-15 minutes, depending on your connection)
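Optional check, if you happen to have Python installed: the sketch below asks the local Ollama server which models it has and confirms that `llama3.1:8b` is among them. It assumes Ollama is listening on its default local address (`http://localhost:11434`); the helper names are made up for this example. Running `ollama list` in Command Prompt shows the same information without any code.

```python
import json
import urllib.request

# Assumption: Ollama's local server is running on its default address.
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def installed_model_names(tags_response: dict) -> list[str]:
    """Extract model names from the JSON returned by GET /api/tags."""
    return [model["name"] for model in tags_response.get("models", [])]


def is_installed(tags_response: dict, wanted: str) -> bool:
    """Return True if the wanted model tag appears in the server's list."""
    return wanted in installed_model_names(tags_response)


if __name__ == "__main__":
    try:
        with urllib.request.urlopen(OLLAMA_TAGS_URL, timeout=10) as resp:
            tags = json.load(resp)
        print("llama3.1:8b installed:", is_installed(tags, "llama3.1:8b"))
    except OSError as err:
        # Connection errors land here, e.g. when Ollama is not running.
        print("Could not reach Ollama:", err)
```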

Step 3: Create Business Knowledge Base File

- Open Notepad or any text editor

- Save a new file as "support_prompt.txt" on your desktop

- This will contain your AI assistant's instructions and business data

Step 4: Write System Prompt with Business Context

- Paste this template at the top of your file:

You are a customer support assistant for [Your Store Name]. Answer questions using ONLY the information below. Never invent products, prices, or policies. If information isn't provided, direct customers to contact support or visit the website.

BUSINESS INFORMATION:
- Shipping: Standard US delivery 3-5 business days, International 7-14 days
- Returns: 30-day policy, items must be unused, return form at website.com/returns
- Payment: Visa, Mastercard, American Express, PayPal accepted
- Support Hours: Monday-Friday 9am-5pm EST

Step 5: Add Specific Product Information

- Include key product details customers frequently ask about

- Keep descriptions concise but complete

- Example: "Widget Pro available in blue, red, black. Dimensions: 6x4x2 inches. Weight: 1.2 lbs."
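If you would rather keep your policies and product details as structured data and generate the prompt file from them, something like the following sketch could work. It is entirely optional. The function name, the "PRODUCT INFORMATION:" heading, and the sample store name are assumptions made for this example; the wording of the instructions comes from the template above.

```python
from pathlib import Path


def build_system_prompt(store_name: str, facts: dict[str, str], products: list[str]) -> str:
    """Assemble the system prompt text from business facts and product lines."""
    lines = [
        f"You are a customer support assistant for {store_name}. "
        "Answer questions using ONLY the information below. "
        "Never invent products, prices, or policies. "
        "If information isn't provided, direct customers to contact support "
        "or visit the website.",
        "",
        "BUSINESS INFORMATION:",
    ]
    lines += [f"- {topic}: {detail}" for topic, detail in facts.items()]
    if products:
        # Hypothetical section heading; the original template has no product section.
        lines += ["", "PRODUCT INFORMATION:"]
        lines += [f"- {product}" for product in products]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    prompt = build_system_prompt(
        "Example Store",  # placeholder store name
        {
            "Shipping": "Standard US delivery 3-5 business days, International 7-14 days",
            "Returns": "30-day policy, items must be unused, return form at website.com/returns",
            "Payment": "Visa, Mastercard, American Express, PayPal accepted",
            "Support Hours": "Monday-Friday 9am-5pm EST",
        },
        ["Widget Pro available in blue, red, black. Dimensions: 6x4x2 inches. Weight: 1.2 lbs."],
    )
    # Writes the file into the current folder; move it wherever you keep your prompt.
    Path("support_prompt.txt").write_text(prompt, encoding="utf-8")
```

Keeping the facts in one place like this makes it harder for the prompt and your actual policies to drift apart when something changes.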

Step 6: Test Customer Support Responses

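- In Command Prompt, type `ollama run llama3.1:8b` and press Enter

- Type `"""` and press Enter, paste your entire system prompt, then type `"""` and press Enter again (the triple quotes let Ollama accept a multi-line message; without them each pasted line is sent on its own)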

- Ask test questions like "What's your return policy?" or "Do you ship internationally?"
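Pasting the prompt by hand gets tedious after a few rounds of testing. As an optional alternative, the sketch below sends the same test questions through Ollama's local HTTP chat endpoint, passing the contents of `support_prompt.txt` as the system message. It assumes the default server address and that the prompt file sits in the folder you run the script from; the function names are invented for this example.

```python
import json
import urllib.request
from pathlib import Path

# Assumptions: default Ollama address, and the model pulled earlier in this guide.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"
MODEL = "llama3.1:8b"


def build_chat_request(system_prompt: str, question: str, model: str = MODEL) -> dict:
    """Build the JSON body for a single, non-streaming chat request."""
    return {
        "model": model,
        "stream": False,  # ask for one complete JSON reply instead of chunks
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question},
        ],
    }


def ask(system_prompt: str, question: str) -> str:
    """Send one question to the local model and return the reply text."""
    body = json.dumps(build_chat_request(system_prompt, question)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_CHAT_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.load(resp)["message"]["content"]


if __name__ == "__main__":
    try:
        prompt = Path("support_prompt.txt").read_text(encoding="utf-8")
        for q in ["What's your return policy?", "Do you ship internationally?"]:
            print("Q:", q)
            print("A:", ask(prompt, q))
            print()
    except OSError as err:
        # Covers a missing prompt file as well as Ollama not running.
        print("Could not run the test:", err)
```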

Step 7: Refine Responses Based on Testing

- Note any incorrect or incomplete answers

- Add missing information to your knowledge base

- Test again until responses match your business policies exactly
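One way to make this refine-and-retest loop repeatable is a small checklist of facts that each answer must mention. The sketch below is a rough illustration, not a full test framework: the `CHECKS` table and function names are hypothetical, and the comparison is a plain case-insensitive substring match, so an answer that says "30 day" instead of "30-day" would be flagged even though it is correct.

```python
def missing_facts(answer: str, required: list[str]) -> list[str]:
    """Return the required facts that do not appear in the answer (case-insensitive)."""
    lowered = answer.lower()
    return [fact for fact in required if fact.lower() not in lowered]


# Hypothetical checklist built from the sample business information in this guide.
CHECKS = {
    "What's your return policy?": ["30-day", "website.com/returns"],
    "Do you ship internationally?": ["7-14"],
    "Do you accept PayPal?": ["PayPal"],
}


def review(answers: dict[str, str]) -> dict[str, list[str]]:
    """Map each question to the facts missing from its answer; empty dict means all passed."""
    problems = {}
    for question, required in CHECKS.items():
        gaps = missing_facts(answers.get(question, ""), required)
        if gaps:
            problems[question] = gaps
    return problems


if __name__ == "__main__":
    # Paste the model's answers here after each test round.
    answers = {
        "What's your return policy?": (
            "We offer a 30-day return policy for unused items. "
            "Visit website.com/returns to complete the return form."
        ),
    }
    for question, gaps in review(answers).items():
        print(f"{question} -> missing: {', '.join(gaps)}")
```

Anything the check reports is a candidate for Step 7: either the fact is missing from your prompt file, or the model ignored it and the wording in the prompt needs to be more explicit.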

Tools Used

- Ollama: Local LLM runtime (this guide uses the Windows version)

- Llama 3.1 8B: Meta's instruction-tuned model optimized for conversation

- Windows Command Prompt: Interface for running Ollama commands

- Notepad: Text editor for creating system prompts and knowledge base

- Local Hardware: Runs entirely on your computer (8GB+ RAM recommended)

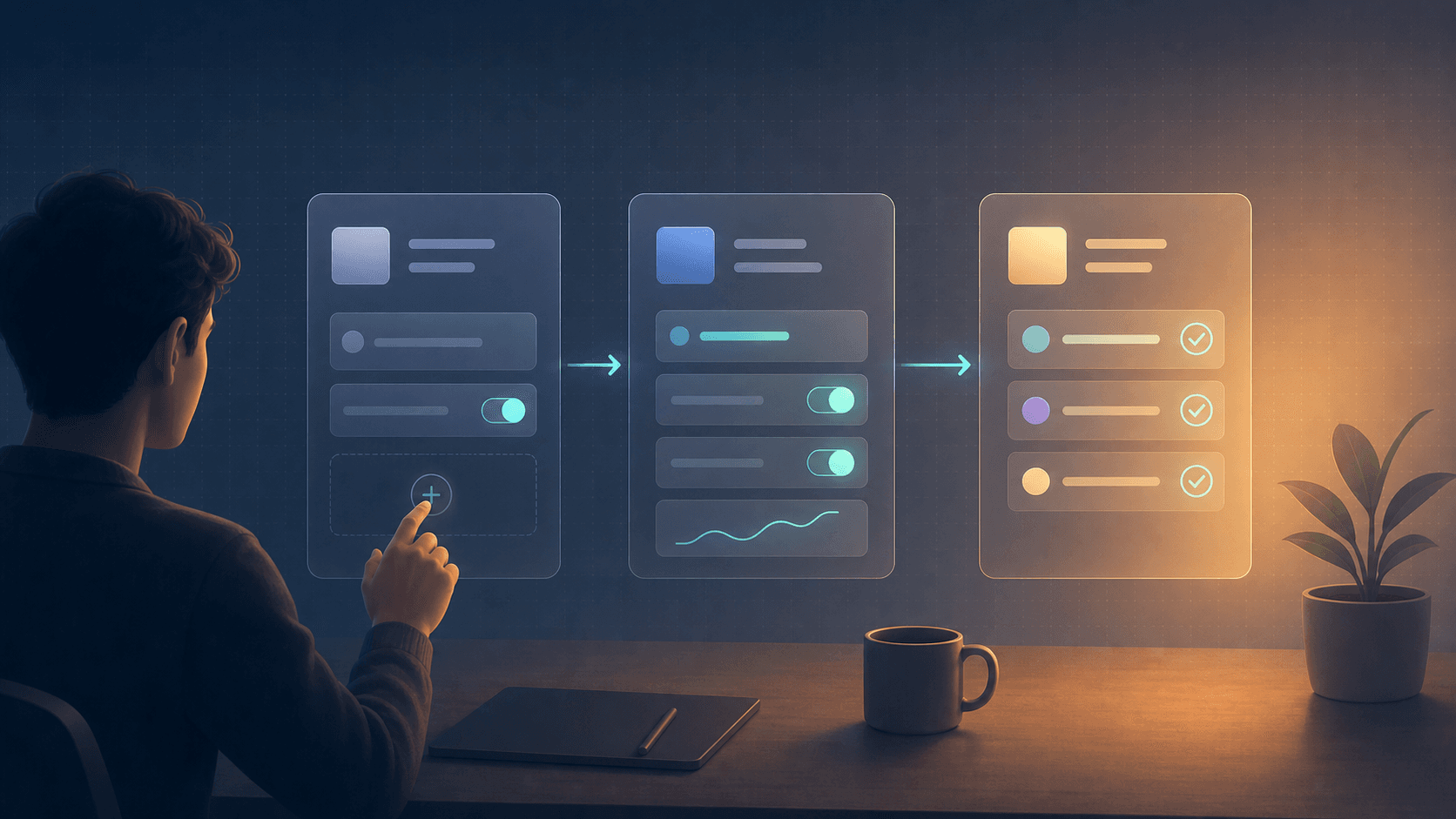

Visual Logic Flow

Customer Question → System Prompt + Business Data → Llama 3.1 Processing → Accurate Response

The system combines your custom business instructions with Llama 3.1's language understanding. Each customer query is answered against your specific knowledge base, which keeps responses on-brand and grounded in your policies. A model of this size can still occasionally invent details, which is why the testing and refinement steps matter.

Example Output

Customer Query: "What's your return policy and how do I start a return?"

AI Response: "We offer a 30-day return policy for items that are unused and in original condition. To start a return, please visit website.com/returns to complete the return form. Our support team can also help you Monday-Friday 9am-5pm EST if you need assistance."

Customer Query: "Do you accept Apple Pay?"

AI Response: "We currently accept Visa, Mastercard, American Express, and PayPal. We don't currently support Apple Pay, but you can use any of these payment methods at checkout."

Before vs After Implementation

| Metric | Before | After |
|---|---|---|
| Daily FAQ Response Time | 2-4 hours of manual work | Under 30 seconds per query |
| Response Consistency | Varies by mood/memory | Consistent with the policies in your prompt |
| Monthly Support Costs | $0 (your time) or $50-$200 (service) | $0 in ongoing fees |
| Customer Wait Time | Hours to days | Instant (when implemented) |
| Data Privacy Risk | High (third-party services) | Minimal (runs locally) |

Hardware Requirements and Performance

Llama 3.1 8B runs on most modern computers. You need roughly 8GB of available RAM for smooth operation, though 12GB or more performs better. Expect responses in roughly 2-5 seconds on typical hardware; older CPU-only machines can take noticeably longer.

Processing happens entirely on your machine. No internet connection is required after the initial download. Customer data never leaves your computer, which makes privacy compliance much simpler.

Tip: Close unnecessary programs before running Ollama to free up system resources for better response speed.
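To see how your own machine compares with the figures above, you can read the timing fields that Ollama includes in a non-streaming HTTP response: `eval_count` (tokens generated) and `eval_duration` and `total_duration` (both in nanoseconds). The sketch below only does the arithmetic; the helper names are invented here, and the sample numbers are made up for illustration, not measurements.

```python
def tokens_per_second(response: dict) -> float:
    """Generation speed from a non-streaming Ollama response.

    eval_count is the number of tokens generated;
    eval_duration is the time spent generating them, in nanoseconds.
    """
    duration_ns = response.get("eval_duration", 0)
    if duration_ns <= 0:
        return 0.0
    return response.get("eval_count", 0) / (duration_ns / 1e9)


def total_seconds(response: dict) -> float:
    """Wall-clock time for the whole request, converted from nanoseconds."""
    return response.get("total_duration", 0) / 1e9


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    sample = {
        "eval_count": 120,
        "eval_duration": 4_000_000_000,
        "total_duration": 5_000_000_000,
    }
    print(f"{tokens_per_second(sample):.1f} tokens/s, {total_seconds(sample):.1f} s total")
```

If the total time is much larger than the generation time on the first request, the difference is usually the model being loaded into memory; later requests tend to be faster.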

Limitations and Realistic Expectations

This local setup excels at FAQ automation and policy questions but has clear boundaries. The AI cannot access real-time order data, process refunds, or handle complex technical issues requiring human judgment.

Responses depend entirely on information you provide in the system prompt. The AI won't learn from conversations or update its knowledge automatically. You must manually update the prompt file when policies change.

Integration with live chat systems requires additional technical setup beyond this guide's scope. This workflow creates a testing environment for developing responses you can copy-paste into customer communications.

Clear Outcome: What Changes After Implementation

Running Llama 3 locally turns FAQ replies from repeated typing into a quick review step. You get instant, consistent draft responses for the majority of common inquiries while keeping complete control over your business data.

Time savings compound quickly. Instead of typing the same shipping policy explanation dozens of times weekly, you generate consistent responses in seconds. This frees hours for product development, marketing, and actual business growth.

The system costs nothing to operate after setup. Unlike subscription services that charge per message or monthly fees, local deployment eliminates ongoing costs while providing unlimited usage.

You can realistically expect to automate responses for shipping questions, return policies, payment methods, basic product information, and business hours inquiries. Complex customer service issues still require human attention, but roughly 60-70% of typical e-commerce support tickets become instantly answerable.
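As a back-of-the-envelope illustration, the figures quoted in this guide (2-4 hours of daily support work, 60-70% of tickets answerable) can be combined into a rough daily time saving. This assumes time spent is proportional to ticket share and that generated drafts need little editing, neither of which is guaranteed; the function name is invented for the example.

```python
def hours_saved_per_day(
    daily_hours: tuple[float, float], automatable_share: tuple[float, float]
) -> tuple[float, float]:
    """Multiply the low and high ends of both ranges to get a rough savings range."""
    low = daily_hours[0] * automatable_share[0]
    high = daily_hours[1] * automatable_share[1]
    return (round(low, 2), round(high, 2))


if __name__ == "__main__":
    low, high = hours_saved_per_day((2.0, 4.0), (0.60, 0.70))
    print(f"Estimated time saved: {low}-{high} hours per day")
```

Treat the result as an upper bound for planning purposes: reviewing and sending each draft still takes some time.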
